DeepSeek released DeepSeek V4 on April 24, pricing it well below major competitors while claiming stronger performance and improved agent capabilities. The launch came one day after OpenAI unveiled GPT-5.5, highlighting a sharp divergence in pricing strategy between the two offerings.

DeepSeek officially released a preview of the new DeepSeek V4 line and open-sourced it under the MIT license, which lets developers download it, run it locally, and modify the source code. The line comes in two versions: V4-Pro and V4-Flash.

On its API, DeepSeek lists V4-Pro at 3.48 USD per one million tokens. The article puts this at about one-eighth of GPT-5.5's output price of 30 USD per one million tokens; the exact ratio is 3.48 / 30 ≈ 0.116, or a little under one-eighth.
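To make the gap concrete, here is a minimal Python sketch that applies the two quoted per-million-token rates to a hypothetical workload of 50 million tokens per month. It assumes both figures are flat rates for the same kind of token (the article quotes GPT-5.5's output price but does not say whether the 3.48 USD figure is an input or output price), and it ignores caching discounts, tiered pricing, and differences in how many tokens each model generates for the same task. The constant names and the workload size are illustrative, not taken from either company's documentation.

```python
# Per-million-token prices quoted in the article (USD).
# Assumption: both figures apply to the same kind of token (output tokens).
DEEPSEEK_V4_PRO_USD_PER_M = 3.48
GPT_5_5_OUTPUT_USD_PER_M = 30.0


def cost_usd(tokens: int, usd_per_million: float) -> float:
    """Cost of `tokens` tokens at a flat per-million-token rate."""
    return tokens / 1_000_000 * usd_per_million


if __name__ == "__main__":
    # Hypothetical workload: 50 million tokens per month.
    monthly_tokens = 50_000_000
    v4 = cost_usd(monthly_tokens, DEEPSEEK_V4_PRO_USD_PER_M)
    gpt = cost_usd(monthly_tokens, GPT_5_5_OUTPUT_USD_PER_M)
    print(f"DeepSeek V4-Pro: ${v4:,.2f}")
    print(f"GPT-5.5:         ${gpt:,.2f}")
    # Ratio of the two bills, and how many times more GPT-5.5 costs.
    print(f"Price ratio:     {v4 / gpt:.3f} (GPT-5.5 costs {gpt / v4:.2f}x as much)")
```

With these inputs the sketch reports 174.00 USD for V4-Pro against 1,500.00 USD for GPT-5.5, a ratio of 0.116, meaning GPT-5.5 costs roughly 8.62 times as much for the same token count. Because the ratio is independent of volume, the gap scales linearly with usage under these flat-rate assumptions.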

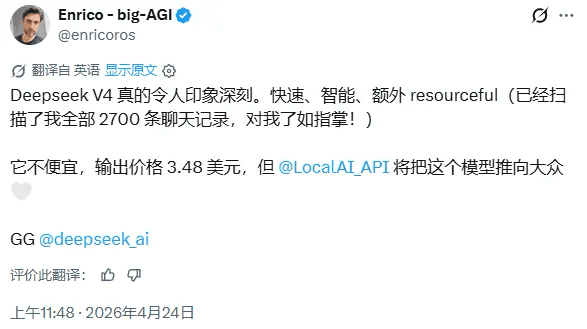

A developer identified as Enrico called V4 "truly impressive, fast and smart," but added that the 3.48 USD price is not "cheap"; he expects LocalAI to help broaden adoption.

DeepSeek positions V4-Pro as its flagship model, aiming to compete with top closed-source commercial systems. The company claims that on mathematics, engineering, and programming benchmarks, V4-Pro outperforms all current open-source models.

On global knowledge, V4-Pro is described as far ahead of other open-source models, trailing only Google's Gemini 3.1 Pro. The article also says V4-Pro's agent capabilities are significantly improved over the previous generation and now rank highest among open-source models.

V4-Flash is positioned as lighter, faster, and cheaper than V4-Pro. DeepSeek says Flash has slightly weaker global knowledge but comparable reasoning depth. Because it has fewer total and activated parameters, Flash's API service is faster and cheaper, according to the article.

In agent evaluations, V4-Flash reportedly performs on par with V4-Pro on simple tasks but lags on harder ones. DeepSeek says this makes Flash better suited to enterprise workloads of moderate complexity where latency and cost are the main concerns.

Both V4-Pro and V4-Flash support a context window of up to 1 million tokens through the API, and both offer a non-reasoning mode and a deep-reasoning mode.

For complex agent scenarios, DeepSeek recommends enabling deep-reasoning mode with the reasoning intensity set to maximum.

The company also says the V4 line has been adapted and optimized for mainstream agent products including Claude Code, OpenClaw, OpenCode, and CodeBuddy, with improved performance on coding tasks and document generation.

A key question raised by the release is which chips were used to train the models. Huawei confirmed that its latest AI computing cluster, built on Ascend processors, can support DeepSeek V4. However, it remains unclear how much of the training ran on Huawei chips versus NVIDIA chips.

DeepSeek also said that limited supply of high-end compute is currently keeping V4-Pro's throughput very low. The article adds that DeepSeek expects the Pro version's price to drop significantly in the second half of next year, once Huawei's Ascend 950 enters mass production.

The article argues that the pricing gap, with DeepSeek V4-Pro at 3.48 USD per one million tokens against GPT-5.5 at 30 USD per one million tokens, will force AI product companies to reassess their profitability models as the cost of AI capability falls.
