Three separate changes to Anthropic’s Claude Code, made between March 4 and April 20, triggered measurable declines in reasoning behavior, higher token usage, and reduced response quality, according to the article’s account. Developers detected some of the issues early, but acknowledgment and corrective action took weeks, and the impact users reported was not formally addressed until later.

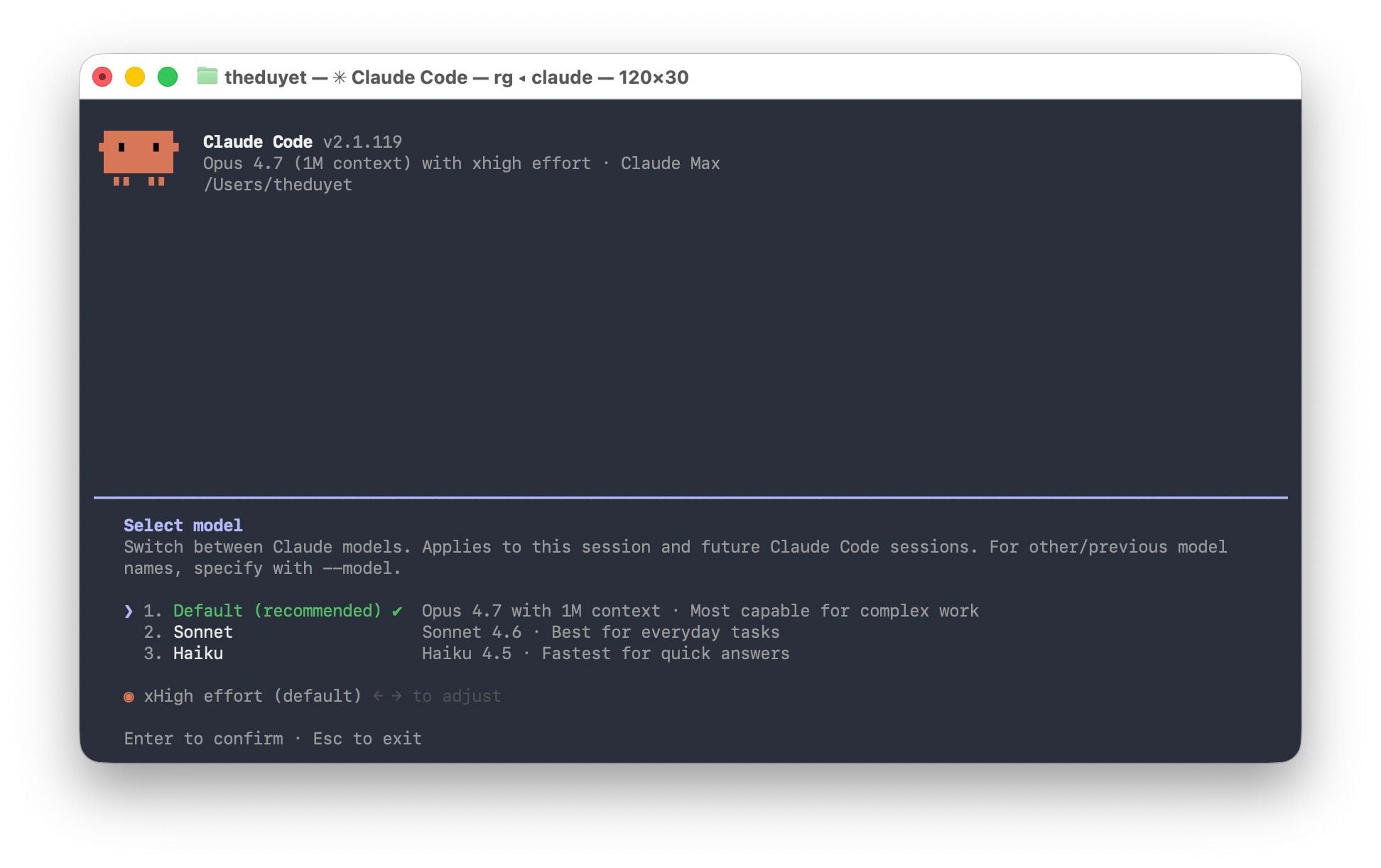

On March 4, Anthropic lowered Claude Code’s default reasoning effort from “high” to “medium”, citing reports that the interface could freeze while the model thought for too long. After community feedback that Claude Code appeared less capable, Anthropic reversed course on April 7, setting the default to “xhigh” for Opus 4.7 and “high” for other models.

On March 26, an update intended to optimize the memory cache was implemented incorrectly. Instead of clearing the session’s reasoning history a single time, the faulty code deleted that history after every turn. The article says this caused Claude to forget why it had performed prior steps, leading to repeated actions, incorrect approaches, and increasing inconsistency on complex tasks.

The same issue also bypassed the memory cache, driving token costs higher than anticipated and exhausting usage limits faster. Anthropic fixed the problem on April 10.
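The difference between the two behaviors can be made concrete with a small simulation. This is a minimal sketch, not Anthropic's code: the `Session` class, the `run_turns` function, and the idea that the intended clean-up runs exactly once are all assumptions made for illustration. It only shows why wiping reasoning history on every turn leaves the model with no record of its earlier steps.

```python
from dataclasses import dataclass, field


@dataclass
class Session:
    """Hypothetical session state; not Anthropic's actual data model."""
    reasoning: list[str] = field(default_factory=list)
    pruned_once: bool = False


def run_turns(steps: list[str], prune_every_turn: bool) -> list[int]:
    """Return how many earlier reasoning notes are visible at each turn."""
    session = Session()
    visible = []
    for step in steps:
        if prune_every_turn:
            # Faulty behavior as the article describes it: history is
            # wiped on every turn, so nothing carries over.
            session.reasoning.clear()
        elif not session.pruned_once:
            # Assumed intended behavior: a single clean-up, after which
            # reasoning accumulates normally.
            session.reasoning.clear()
            session.pruned_once = True
        visible.append(len(session.reasoning))
        session.reasoning.append(f"why I did: {step}")
    return visible


if __name__ == "__main__":
    steps = ["read file", "edit file", "run tests", "fix failure"]
    print("prune once:      ", run_turns(steps, prune_every_turn=False))
    print("prune every turn:", run_turns(steps, prune_every_turn=True))
```

With a single clean-up, each turn sees one more prior note than the last. With per-turn clearing, every turn starts from zero, which matches the repeated actions and lost context the article describes.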

On April 16, Anthropic added a system prompt intended to limit response length: no more than 25 words between tool calls and no more than 100 words for the final answer. The article states that internal checks initially did not reveal issues, but deeper reviews after deployment with Opus 4.7 found that coding quality dropped by about 3% across both Opus 4.6 and 4.7. The directive was rolled back on April 20.
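To give a sense of how tight those limits are, the sketch below counts words in a transcript against the two thresholds. The article describes the limits as a system prompt instruction, not an enforced check, so this is purely illustrative. The function names and the whitespace-based word count are assumptions; only the numbers 25 and 100 come from the article.

```python
MAX_WORDS_BETWEEN_TOOL_CALLS = 25   # limit reported in the article
MAX_WORDS_FINAL_ANSWER = 100        # limit reported in the article


def word_count(text: str) -> int:
    """Count whitespace-separated words; a deliberately crude measure."""
    return len(text.split())


def check_limits(interim_messages: list[str], final_answer: str) -> list[str]:
    """Return human-readable violations; an empty list means none."""
    violations = []
    for i, message in enumerate(interim_messages):
        n = word_count(message)
        if n > MAX_WORDS_BETWEEN_TOOL_CALLS:
            violations.append(f"interim message {i}: {n} words")
    n = word_count(final_answer)
    if n > MAX_WORDS_FINAL_ANSWER:
        violations.append(f"final answer: {n} words")
    return violations


if __name__ == "__main__":
    interim = ["Reading the config file to find the failing option."]
    print(check_limits(interim, "Fixed the option and re-ran the tests."))
```

A 25-word budget is roughly one or two sentences, which leaves little room to explain intermediate decisions between tool calls.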

The article notes that the developer community detected problems early, but Anthropic initially denied substantive changes. Company staff repeatedly asserted that the model remained capable and that user complaints reflected interface changes rather than true declines in reasoning ability.

A turning point came with an unusually detailed report. On April 2, Stella Laurenzo, senior AI group director at AMD, posted an analysis on GitHub based on 6,852 Claude Code sessions, 17,871 reasoning blocks, and 234,760 tool calls. The analysis concluded that Claude Code was no longer reliable for complex technical tasks.

Laurenzo said her team switched to another provider, warning: “Six months earlier, Claude stood at the level of reasoning and execution. Competitors must be monitored and evaluated carefully. Anthropic is no longer alone at the level Opus once occupied.”

On April 23, Anthropic reset usage limits for all users to compensate for token waste caused by the issues described above.

For future safeguards, the article says Anthropic committed to requiring more internal staff to use the public Claude Code build rather than internal test builds, tightening the process for reviewing system prompts before changes, and opening an @ClaudeDevs account on X to communicate product decisions more transparently.

The incident, as described in the article, highlights a structural issue: changes at the configuration layer around the model (such as the default reasoning level, memory cache management, and length limits) can affect user experience as much as changes to model weights, yet they are subject to less control and communication.
