More than 560 Google employees have signed a joint letter urging Alphabet leadership not to allow the company’s AI tools to be used for covert military campaigns, arguing that such systems should benefit humanity rather than be used for “deadly or extremely harmful purposes.” The letter reflects a broader pattern of internal dissent among technology workers who say they are concerned about unethical or uncontrolled uses of advanced AI.

The Google employees’ letter states that “the understanding of technology demands we act to prevent unethical uses.” It follows earlier concerns raised by employees at Google, Amazon, and Microsoft, who worry that AI products could be used by the Israeli military to target Palestinians in the Gaza conflict.

The article also points to whistleblowing cases in the tech sector. It cites Frances Haugen, who previously exposed how social networks are designed to be addictive, and whose 2021 testimony before Congress later became a legal basis for lawsuits involving Meta and Google.

The letter comes as tensions persist between the Pentagon and Anthropic. In February, Anthropic refused a modified contract with the U.S. Department of Defense, according to the article. Employees and observers cited in the piece say they fear AI could be misused for mass surveillance or to develop deadly autonomous weapons.

In response, the Pentagon has listed Anthropic as a supply-chain risk and threatened to cut federal contracts.

In contrast, a pro-acceleration faction in Silicon Valley argues that such restrictions are irrational: if a company does not want its product used for national security, it should not have supplied it to the government in the first place.

The article says competitors including Google, xAI, and OpenAI appear ready to take over such work. It also notes that Google extended a $200 million contract with the Department of Defense to support covert military operations directly.

It further quotes Palantir’s position on X: “Our rivals will not pause to engage in dramatic debates about the merits of the technology. They will certainly continue forward.” The piece says these arguments would carry more weight if the Department of Defense were willing to learn from mistakes, but it describes the agency as delaying an inquiry into a February bombing of a school in Iran.

The article states that the February bombing killed 168 people, including 110 children. It says preliminary evidence suggests the school was struck by a Tomahawk missile launched by the U.S., potentially linked to mis-targeting aided by AI.

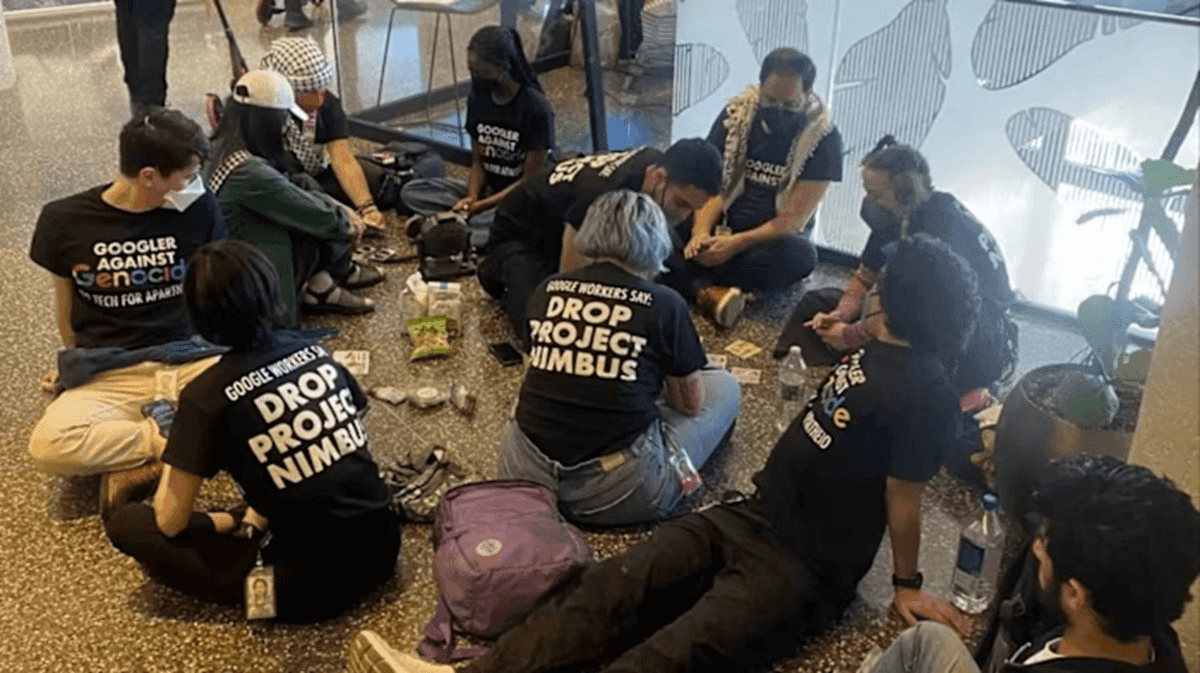

The article also describes how some tech companies have taken a tougher stance toward employee grievances. It says that in 2024, Google dismissed 50 employees for protesting the sale of the company’s cloud services to Israel.

When workers leave, the piece says they are often required to sign strict non-disclosure agreements and pledge not to disparage the company or take actions that could damage its reputation. Against that backdrop, it characterizes the willingness of some employees to risk their careers and reputations to speak out as notable.

The article concludes that while workers cannot and should not be the sole policy-makers, companies’ boards still bear responsibility for setting core principles and managing trade-offs. It argues that if tech leaders want to defend democratic values, they should persuade employees with reasoned arguments rather than suppress debate, noting that employee teams are said to understand the capabilities, limits, and vulnerabilities of the AI they develop.
