Artificial intelligence (AI) is developing rapidly and is increasingly becoming strategic digital infrastructure for Vietnam, raising growing demands for the safety and security of AI systems and for the use of AI in cybersecurity operations.

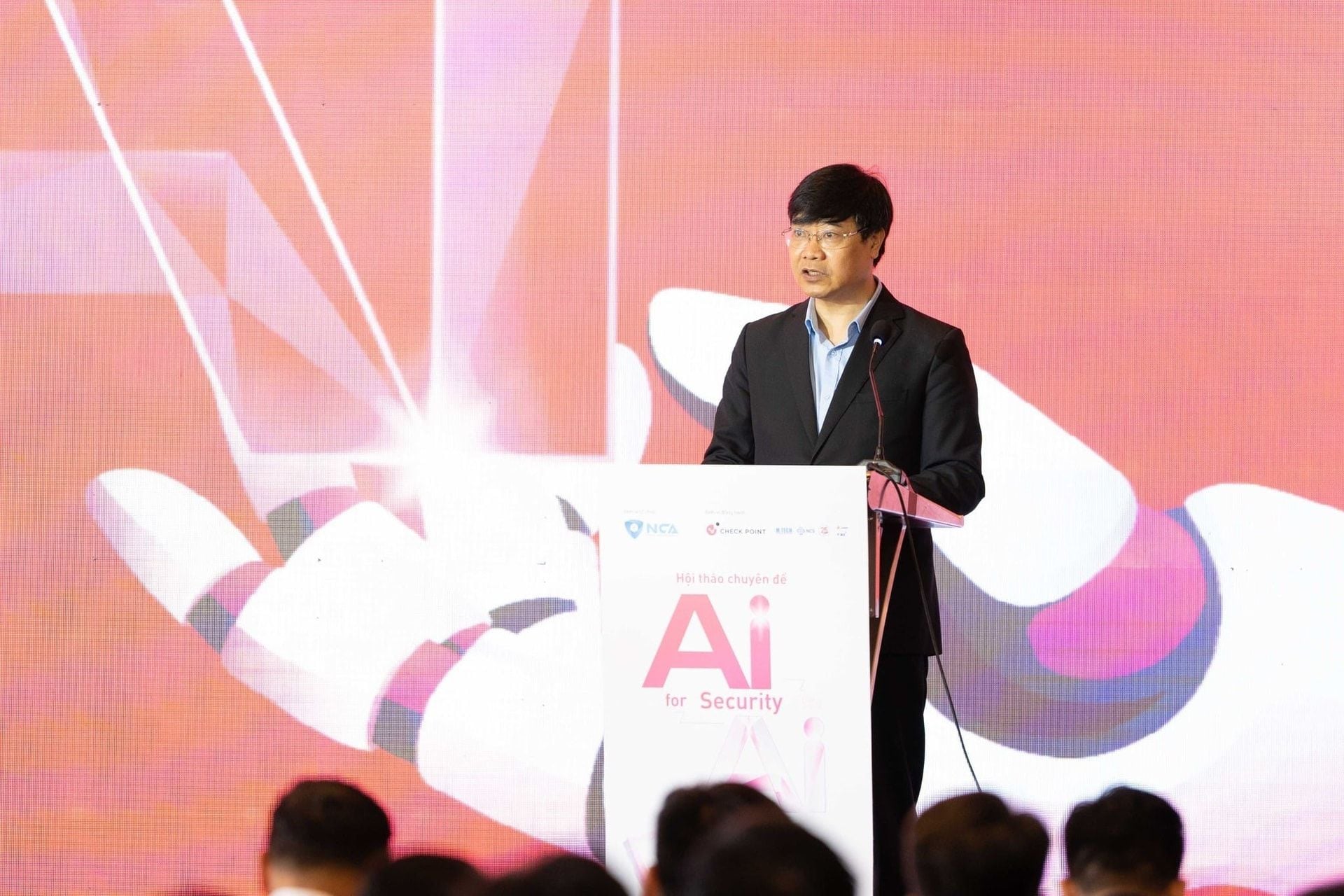

On 7 April in Hanoi, the National Cybersecurity Association (NCA) held the seminar “Security in the AI Era - Strategy to Shape the Digital Future” to provide a forum for sharing practical experience and promoting joint initiatives in cybersecurity and AI.

Speaking at the seminar, Col. Dr. Nguyen Hong Quan, Deputy Director of the Cyber Security and High-Tech Crime Prevention Division (Ministry of Public Security) and Head of the NCA’s Data Security and Personal Data Protection Committee, said AI is fundamentally changing the methods and nature of threats in cyberspace.

In his view, cybercrime has shifted from relying mainly on traditional techniques and tools to large-scale, AI-assisted automation, enabling attacks of unprecedented sophistication. He noted that fraud is increasingly personalized, deepfakes are harder to detect, and malware can adapt to evade defenses.

Col. Dr. Nguyen Hong Quan also highlighted that cybercrime is moving from individual operations to organized, professional models, taking on systemic characteristics and trending toward “industrialization.” AI can also shorten the time needed to prepare, launch and scale attacks, turning cyberspace into a dynamic battlefield where offense and defense play out continuously and at ever-increasing speed.

“Realities in Vietnam show these trends are no longer potential risks but are now clearly present,” Col. Dr. Nguyen Hong Quan said. He cited cases including fraudulent messages impersonating banks and app brands to steal victims’ assets, as well as deepfake calls that imitate a person’s face and voice to build trust and request money transfers.

He added that perpetrators also impersonate authorities and use sophisticated scripts to extract personal information and put psychological pressure on victims, showing an increasingly tight integration of technology and behavioral psychology.

From a national security perspective, Col. Dr. Nguyen Hong Quan said AI creates strategic challenges, including protecting data sovereignty, reducing dependence on foreign platforms and technologies, and safeguarding AI systems themselves, which are increasingly targeted by attacks.

He emphasized that these challenges are not only technical but also institutional and policy-related, affecting each country’s capacity for self-reliance.

“AI is not only a tool, but is becoming a factor that reshapes cyberspace and national security. Proactively mastering and safeguarding AI technologies will be a decisive factor for the sustainable and safe development of each country in the future,” he said.

From an international security perspective, Ms. Ruma Balasubramanian, Chair of Check Point Software Technologies APAC and Japan, said today’s AI-specific threats fall into three categories: data leaks; command-based attacks (feeding the system improper instructions); and process interference.

She noted that these threats are larger in scale and spread faster than traditional threats such as email-borne attacks.

To counter them, Ms. Balasubramanian recommended that enterprises establish a Zero Trust model and ensure that AI actors have minimal privileges when accessing data.

Despite AI’s advanced data processing capabilities, experts at the seminar said it cannot fully replace humans in cybersecurity.

Mr. Vu Duy Hien, Chief of Office and Secretary General of the National Cybersecurity Association, said AI can shorten data analysis time from weeks to seconds. However, he added that strategic decisions, and judgments involving political and ethical context, still require real-world human experience.

He also stated that AI cannot understand an attacker’s deeper intent or be accountable for its decisions.

Mr. Vu Duy Hien further warned that misuse of AI in daily life can undermine independent thinking, particularly among young people. He said building a safe AI ecosystem must start with education, better policy frameworks, and stronger risk governance from the design stage onward.
